In 2009, the Associated Press Managing Editors study on digital news practices canvassed 110 news organizations about what happens when old stories resurface online: someone googles their name and finds an arrest from a decade ago, a quote taken out of context, or a story that was accurate at the time but no longer is. The results were uncomfortable. Most newsrooms had given the question almost no thought. The concept of unpublishing barely existed as a term.

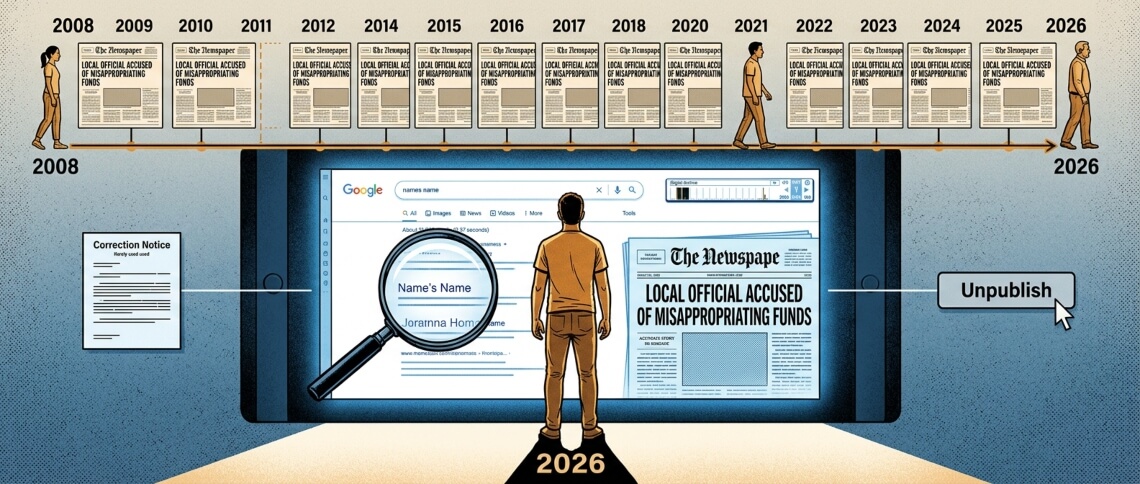

Seventeen years later, the question hasn’t gone away. It has gotten more complicated. Google’s search infrastructure means that a story published in 2008 about a municipal bylaw dispute in a mid-sized Canadian city surfaces just as easily as one published yesterday. The person involved may have moved, changed careers, or simply aged past the version of themselves in the story. The story stays.

Below, we explain what corrections and unpublishing mean, what the research shows about how newsrooms handle them, what frameworks editors use to decide, and where the industry’s documented practice falls short.

| Figure | Finding | Source |
|---|---|---|
| 80% | of US newsrooms have some unpublishing policy | Deborah Dwyer / Nieman Lab research |
| 78% | of editors say newsrooms should sometimes unpublish | Survey of news organizations, published in ABA Journal |
| ~2% | of those policies are shared publicly beyond the newsroom | Dwyer / Nieman Lab research; the number that stands out |
| 67% | would unpublish when content is inaccurate or unfair | Same survey as the 78% figure; a different threshold than that figure suggests |

The Difference Between Unpublishing and Deleting

Unpublishing is not deleting the historical record. That distinction matters because the two are routinely conflated, by both requesters and journalists. When a newsroom unpublishes a story, it typically removes the URL from public indexing, or anonymizes the individuals named, or adds an editor’s note explaining the change. The Wayback Machine almost certainly still has it. Social media shares of the original still exist. Unpublishing offers obscurity, not erasure.

Deborah Dwyer, who spent years researching this issue as a Reynolds Journalism Institute fellow at the Missouri School of Journalism, defines it as

“the act of deleting factual content that has been previously published online in response to an external request prompted by personal motivations such as embarrassment or privacy concerns.”

That definition is precise. It excludes corrections of factual error, which is a different category entirely, and it excludes takedowns driven by legal threat, which is different again.

The options a newsroom actually has when someone requests removal are wider than most people realize. From least to most interventionist: adding a follow-up story with updated information; appending an editor’s note; anonymizing the individual’s name within the piece; de-indexing the page so it doesn’t appear in Google search results while remaining accessible via direct URL; or removing the URL entirely. Each of those involves a different set of editorial trade-offs.
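
The practical difference between the last two options shows up in what a web server sends back. The sketch below is illustrative only: the `Intervention` and `Response` names and the `response_for` function are hypothetical, not any newsroom's actual system. It assumes de-indexing is done with the `X-Robots-Tag: noindex` response header, a directive that major search engines such as Google honour, and that a removed URL answers with HTTP status 410 (Gone). A newsroom could equally use a robots meta tag in the page, or return 404 for removed pages.

```python
from dataclasses import dataclass
from enum import IntEnum


class Intervention(IntEnum):
    """Options ordered from least to most interventionist (hypothetical names)."""
    FOLLOW_UP_STORY = 1
    EDITORS_NOTE = 2
    ANONYMIZE = 3
    DE_INDEX = 4
    REMOVE_URL = 5


@dataclass
class Response:
    status: int
    headers: dict[str, str]


def response_for(intervention: Intervention) -> Response:
    """Return the status and headers a browser or crawler would receive."""
    if intervention is Intervention.REMOVE_URL:
        # Assumption: 410 Gone signals that the page was removed deliberately.
        return Response(status=410, headers={})
    if intervention is Intervention.DE_INDEX:
        # The page still loads via direct link; crawlers are asked not to list it.
        return Response(status=200, headers={"X-Robots-Tag": "noindex"})
    # Follow-ups, editor's notes, and anonymization change the page content,
    # not how the URL is served.
    return Response(status=200, headers={})
```

In this model a de-indexed story still returns its full text to anyone holding the link, which is why de-indexing offers obscurity without altering the archive. Note that `noindex` hides the page from all searches, whereas EU-style delisting applies only to searches on the individual's name.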

Nothing is forgotten. In the EU, the information is simply delisted from search engines when the individual’s name is used in the search. This offers a level of obscurity, not erasure.

— Research on unpublishing practices, journalism.co.uk / Deborah Dwyer

The “Long Tail” Problem: Old Stories That Keep Surfacing

The phrase “long tail” comes from economics – the idea that content that attracts modest attention at publication keeps accumulating readers over the years. For news archives, it describes something specific: stories published for an audience of a few thousand people in 2007, dormant for years, now the first result when someone searches a private individual’s name. Kathy English documented this pattern directly in “The Long Tail of News: To Unpublish or Not to Unpublish”, a report commissioned through the Canadian Journalism Project that remains one of the most cited primary sources on the subject.

In origin, this is largely a search engine problem, not a journalism problem. Before digitization, newspaper archives existed on microfilm in libraries. Accessing them required physical presence, knowing the approximate publication date, and patience. The historical record was there, but practical obscurity meant that most people could move on from what had been reported about them. That obscurity is gone.

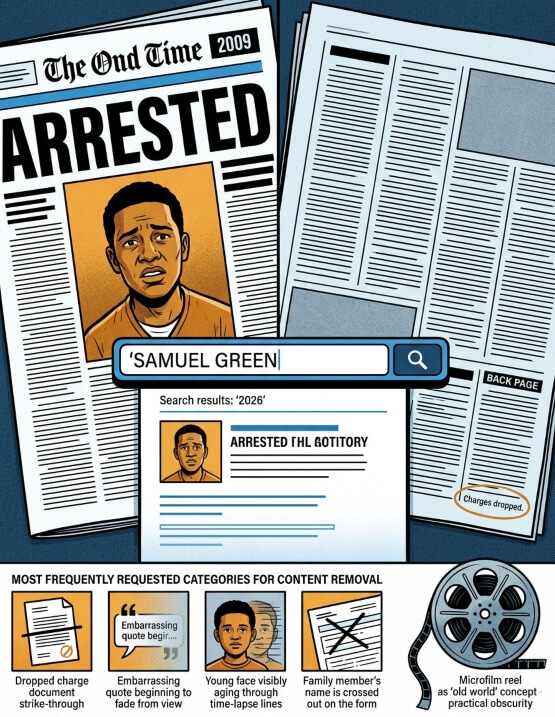

The stories most often requested for removal cluster into predictable categories: minor criminal charges that were later dropped or resulted in no conviction; embarrassing personal situations reported during a period of newsworthiness that has since passed; quotes given voluntarily by people who were young and have since changed their views or circumstances; and names attached to crimes committed by family members, not the subject themselves.

For crime reporting specifically, the structural problem is asymmetry. Arrests are almost always covered. Acquittals, dropped charges, and expunged records are rarely followed up. In September 2016, the Tampa Bay Times formed one of the earliest documented formal committees for handling exactly that gap. Its first decision: anonymizing a story about a woman who had been interviewed about a job with a cleaning service at 19, a story now damaging her career in an unrelated field. Most newsrooms hadn’t formalized a process for making that call.

When Should a Newsroom Unpublish? The Decision Framework

No agreed industry protocol exists. That’s the most honest answer available in 2026. Most newsrooms that handle these requests do so informally, case by case, with decisions resting on individual editors. The Nieman Lab, citing Dwyer’s research, found that while roughly 80 percent of surveyed outlets had some form of unpublishing policy, almost half of those policies were not in writing. Only 2 percent were shared beyond the newsroom, meaning the public, including the people making requests, had no way to know what standard was being applied.

The gap between what newsrooms say they do and what is actually documented is, in most cases, significant.

The frameworks that do exist tend to organize decisions around a few consistent questions:

| Question | How it typically weighs |
|---|---|
| Was the original reporting accurate? | If yes, the threshold for removal is much higher; historical record arguments apply |
| Is the subject a public figure? | Public figures have lower privacy expectations than private individuals; removal is rarely justified for them |
| Have charges been dropped, dismissed, or expunged? | Strong case for at minimum updating the story or adding a follow-up |
| Is the person in danger? | Suicidal ideation or credible safety risk: most newsrooms would remove or anonymize quickly |
| Does the story serve continued public interest? | Ongoing public interest (elected officials, institutional conduct) argues strongly against removal |
| How long ago was it published? | Passage of time weighs toward review, but doesn’t override other factors automatically |
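
To show how these questions might be recorded consistently, here is a minimal sketch of the table as a checklist. It is not any newsroom's actual process: the `UnpublishRequest` fields, the `review_notes` function, and the five-year `review_after_years` default are all hypothetical. It deliberately returns notes for an editor to weigh and does not produce a verdict, since no factor in the table overrides the others automatically.

```python
from dataclasses import dataclass


@dataclass
class UnpublishRequest:
    """Answers to the framework questions for one request (hypothetical fields)."""
    accurate: bool
    public_figure: bool
    charges_dropped_or_expunged: bool
    safety_risk: bool
    continued_public_interest: bool
    years_since_publication: float


def review_notes(req: UnpublishRequest, review_after_years: float = 5.0) -> list[str]:
    """Collect the considerations that apply; the editor still makes the call."""
    notes: list[str] = []
    if req.safety_risk:
        notes.append("Safety risk: consider removing or anonymizing quickly.")
    if req.accurate:
        notes.append("Accurate reporting: threshold for removal is much higher.")
    else:
        notes.append("Inaccurate reporting: handle under the corrections policy.")
    if req.public_figure:
        notes.append("Public figure: lower privacy expectation.")
    if req.charges_dropped_or_expunged:
        notes.append("Charges dropped, dismissed, or expunged: at minimum update or add a follow-up.")
    if req.continued_public_interest:
        notes.append("Continued public interest: argues strongly against removal.")
    # Assumption: the cut-off is illustrative; the table sets no fixed number of years.
    if req.years_since_publication >= review_after_years:
        notes.append("Passage of time: weighs toward review, does not decide it.")
    return notes
```

Saving the returned notes alongside each decision would also give a newsroom the written record that most currently lack.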

In July 2018, Cleveland.com editor Chris Quinn announced what he called a “right-to-be-forgotten experiment”: a dedicated email address where people could request removal of minor crime reports. In January 2021, the Boston Globe launched its Fresh Start initiative, with a committee of 10 journalists reviewing cases. The Bangor Daily News followed the same month. These are the most documented examples in North American journalism. They are also exceptions. The industry has not standardized around them.

Corrections vs. Retractions vs. Updates: Not the Same Thing

These three terms are often used interchangeably. They shouldn’t be.

A correction fixes a factual error. The SPJ Code of Ethics is direct: journalists should “acknowledge mistakes and correct them promptly and prominently.” Reuters’ handbook says the same – errors corrected “promptly and clearly,” never buried in a subsequent story.

A retraction withdraws an entire story because the reporting is fundamentally flawed, not just wrong in a detail. Rolling Stone’s 2014 retraction of “A Rape on Campus” is the most cited North American example; the Columbia Graduate School of Journalism published a detailed analysis of where the reporting failed.

Retractions are rare because the threshold is high. Most major organizations, including the SPJ, Reuters, and AP, publish news retraction guidelines, but these focus on the decision to retract, not on what happens after: how prominently to display the retraction, whether to preserve the original, and how long to keep the retraction notice live.

An update adds new information – a follow-up on a court case that concluded after initial reporting. Good practice is a clear timestamp and note explaining what changed. Most newsrooms do this inconsistently. When Bloomberg’s AI-generated summaries launched in 2025 and produced dozens of corrections within days, it illustrated a specific risk: automation can amplify factual errors faster than editorial review catches them.

The distinction that matters most for unpublishing: a story can be accurate and still cause harm. That’s where the corrections policy stops being the relevant framework. Editors handling unpublishing requests are making a different kind of decision – one that existing ethics codes don’t fully address.

If we want to maintain that there is this entity that we call journalism as a profession, then we do have to arrive at a basic understanding of whether altering our archives is okay or not.

— Deborah Dwyer, Reynolds Journalism Institute / Columbia Journalism Review

How Digital Archives Affect Individuals Over Time

Before 1995 or so, a local newspaper story about a person’s arrest existed in a clipping file in a newsroom library and on microfilm in a public library. Finding it required knowing where to look and having physical access. The information was technically public. In practice, it was obscure.

Digital archives changed that permanently. A story published in 1999 about a bankruptcy filing, a 2003 piece about a drunk driving charge, a 2011 article mentioning someone by name in a neighbourhood dispute – all surface in under a second. The people most affected are rarely public figures. They are private individuals whose connection to a news event was temporary: a teenager who committed a minor offence, a bystander to someone else’s story, someone who spoke to a reporter during a crisis they later recovered from.

The European Court of Justice’s 2014 ruling in the Google Spain case established that search engines could be required to de-index certain results about private individuals in the EU – a legal mechanism that doesn’t exist in Canada or the United States, but one that has influenced how newsrooms think about their own responsibilities.

The question of digital archive ethics (who bears responsibility for content that was accurate when published but is harmful now) sits at the centre of this problem. Reputation management companies have identified this as a business opportunity, actively soliciting people named in old stories and charging fees for services that range from legitimate (submitting requests to newsrooms) to questionable (SEO manipulation to bury results). Some unpublishing requests now arrive via intermediaries, which raises questions about the original motivation and whether the newsroom is being used as a tool in a broader reputation campaign.

What Major Newsrooms Actually Do

The documented examples are mostly American, which reflects where research attention has focused. Canadian newsrooms have largely addressed this on an ad hoc basis without published policies.

The Boston Globe’s Fresh Start initiative, launched in January 2021, is the most structured program in North American journalism. A committee of 10 journalists reviews requests from individuals named in crime-related coverage. Outcomes range from anonymization to de-indexing to editor’s notes. The Globe specifically cited the impact of past crime coverage on communities of colour as part of the rationale, and paired the initiative with a broader rethinking of how the newsroom covers certain crimes going forward, meaning the policy looks backward and forward simultaneously.

The Bangor Daily News launched its program the same month. Cleveland.com’s dedicated request address predates both, from 2018. The Gazette in Cedar Rapids uses an online form. That makes four named examples with documented, public policies. Most newsrooms handle requests without any of this: a phone call, an email to an editor, a decision made and not recorded.

The absence of documentation is itself a choice. Without written standards, there is no consistency, no accountability, and no way for the public to understand what standard was applied to their case. That’s not a comfortable position for an industry that stakes its credibility on transparency.

FAQ

Canada has no legal right to be forgotten, and no legislation equivalent to the EU’s GDPR right to erasure. In journalism contexts, the right to be forgotten exists here only as an editorial choice, not a legal right: newsrooms decide case by case whether to anonymize, de-index, or remove content. The Personal Information Protection and Electronic Documents Act (PIPEDA) does not require news organizations to delete published content.

You cannot compel a newsroom to remove a story under Canadian law, unless the story is defamatory and you obtain a court judgment to that effect. Newsrooms can choose to unpublish a news article voluntarily. Many will consider requests, particularly where charges were dropped or the content is significantly outdated. There is no standard process across outlets.

De-indexing removes a page from search engine results, so it doesn’t appear when someone searches by name, but the URL still exists and is accessible via direct link or services like the Wayback Machine. Deleting removes the URL entirely. De-indexing is more common because it addresses the practical problem of search visibility without altering the archive.

Most Canadian newsrooms do not publish their unpublishing policies. The Toronto Star, Globe and Mail, and CBC do not have documented, publicly available unpublishing frameworks comparable to the Boston Globe’s Fresh Start initiative as of 2026. Requests are handled on a case-by-case basis internally.

A newsroom’s corrections policy should include, at minimum: a visible online corrections policy page with a clear submission process; a stated commitment to prompt and prominent corrections; and a distinction between minor factual corrections, significant factual errors, and retractions. The SPJ Code and Reuters Handbook both address this, though neither covers unpublishing directly.

⚠️ Disclaimer: This page is for informational purposes only and does not constitute legal advice. Laws and newsroom policies vary by jurisdiction and organization. Consult a qualified media lawyer for specific situations.